By John Jones, JD, PhD, Vaxxter Contributor

Over the past 10-15 years, I periodically read news reports or academic studies about an infectious disease, childhood cancer, or other related subjects. Recently, I saw a piece about acute lymphoblastic leukemia (ALL) in which the authors interviewed an expert, Professor Mel Greaves.

In a 2018 article from ScienceDaily, Greaves was quoted as saying:

“I have spent more than 40 years researching childhood leukemia, and over that time there has been huge [sic] progress in our understanding [sic] of its biology and its treatment [sic] — so that today, around 90 per cent of cases are cured [sic]. But it has always struck me that something big was missing, a gap in our knowledge — why or how otherwise healthy children develop leukemia and whether this cancer is preventable.”

Greaves expressed my exact thoughts. It has long struck me too that mainstream scientists are missing something; that there is a big gap in their logic and common sense, especially about childhood cancers. So I decided to do a little investigating into Sir Mel Greaves.

Greaves on Acute Lymphoblastic Leukemia: Part I

In 2018, Greaves authored an academic review titled, “A causal mechanism for childhood acute lymphoblastic leukemia.” In his conclusion, he offers a host of declarations and suppositions on ALL:

“[An absence of] microbial exposures [early in] life triggers … critical secondary mutations [sic]. Risk [of ALL] is further modified by inherited genetics, chance [sic] and, probably [sic], diet. Childhood ALL can be viewed as a … consequence of progress [sic] in modern [sic] societies, where behavioural changes have restrained early microbial exposure. … Childhood ALL may [sic] be a preventable cancer.”

I find many of these terms problematic and the claims unfalsifiable. So I wrote Dr. Greaves, and asked some questions about preventing and curing ALL. I penned:

“I am not sure how you measured chance, or ‘probably diet’ or progress or modern, but those aside, what do you suggest as the means to prevent ALL?”

He was polite enough to respond but made sure to add a slight insult to his edification. On 1 December 2019, Greaves wrote:

“I sense a skeptic, which is OK … The current working or operational definition of cure in childhood ALL is … if they are ten years post-diagnosis without relapse.

“We are actively researching the idea that childhood ALL might be preventable by boosting the gut microbiome in infants [sic], which in turn, should [sic] prime the immune system. At present, this is being pursued in a mouse model [sic] of ALL. For this to translate into a public health initiative, we would have to use one or more safe bacterial species (given orally).”

Skeptics Unite

Indeed I am a skeptic. This man claims to have studied ALL for 40 years. He thinks that ALL might be preventable by boosting the gut microbiome? He wants to give safe bacterial species to infants? That is the extent of his discovery after 40 years? And now he plans on giving mice cancer (euphemistically described as a mouse model). What about all his scientific speculations about ALL due to inherited genes, chance, and diet?

Well, no matter. I have neither been knighted nor given public monies to speculate about childhood leukemia for 40 years. However, I do know how to read, analyze and interpret data. So I donated my time to review mainstream science claims about ALL. I also reviewed peer-reviewed literature – even earlier works by Dr. Greaves himself.

Think Like a Super Scientist: Read

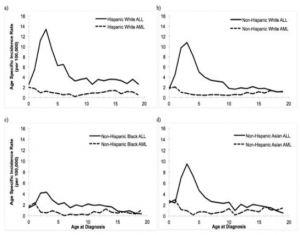

From an issue of the 2016 International Journal of Cancer, Barrington-Trimis et al. report that the data from 2009-2013 show the peak rate for ALL/AML diagnosis is age 3 – with a steady decrease thereafter (see the figure below).

Source: Barrington-Trimis et al. (2016)

This image alone should tell us that vaccines are the cause of, or at least associated with, all diagnoses of Acute Lymphoblastic Leukemia, but I’m getting ahead of myself.

Around the world, records show that cancer rates are expected to increase as the population ages. Editors at the American National Cancer Institute have declared that “Cancer rates always increase over time.” They even offer some data:

- Cancer occurs more frequently in adolescents and young adults (ages 15 to 39 years) than in younger children.

- According to the NCI Surveillance, Epidemiology, and End Results (SEER) program (7), each year in 2011–2015 there were:

  - 16 cancer diagnoses per 100,000 children ages 0 to 14 years

  - 72 cancer diagnoses per 100,000 adolescents and young adults ages 15 to 39 years

  - 953 cancer diagnoses per 100,000 adults aged 40 years or older

Understanding Acute Lymphoblastic Leukemia

From the American Leukemia & Lymphoma Society, here are some facts about the incidence and prevalence of leukemia:

- ALL is the most common cancer in children (ages 0-14), adolescents and young adults (ages 15-20), accounting for 19.8 percent of all cancer cases in this age group;

- In the U.S., from 2011 to 2015, an average of 3,715 children, adolescents, and younger adults were diagnosed with leukemia each year (including 2,769 diagnosed with ALL). (See also Masetti and Pession 2009)

So what is ALL, and why would its incidence peak among the young, contrary to other cancers? Apostolidou et al. (2007) define Acute Lymphoblastic Leukemia as “a heterogeneous group of disorders that result from the clonal proliferation and expansion of malignant lymphoid cells in the bone marrow, blood, and other organs.”

Hmm. Maybe a better description, in plain language, would be “too many bad cells.”

From the NCI we read that ALL is evidenced by “an excessive number of immature lymphocytes (also called lymphoblasts) in the bone marrow interfering [sic] with the production of new red blood cells, white blood cells, and platelets.”

So why would the body of a two or three-year-old child suddenly make excessive lymphoblasts? Or, better, why would a small child make too many bad cells?

In their article published in the New England Journal of Medicine, Hunger and Mullighan (2015) insist that the underlying mechanism [of ALL] involves multiple genetic mutations [sic] that result in rapid cell division.

And there it is. The scientists have an explanation. That leukemia comes from bad genes is, of course, a wrong premise that can easily be disproved. But as a rhetorical device, for MDs and Pharma, every gene theory is the gift that keeps on giving (disease), while assuaging their ignorance and their guilt.

Bad Genes – Just a Rhetorical Device

The claim of faulty genes (aka genetic mutation) is just another way to say: ‘it must be your fault, Mom and Dad.’ In 2007, in explaining ALL, one Canadian team actually wrote:

“[as] for childhood leukemia … its etiology remains largely unknown. [That] the early incidence [of childhood leukemia peaks] at ages 2–5 years points to disease mechanisms initiated prior to conception or during prenatal development.”

Notice how these learned men (and women) so glibly declare that the mechanism for ALL is the abnormal production of lymphoblasts. They declare this while simultaneously ignoring, with glee, the true catalyst: the vaccines.

The Gene-Vaccine Conflict

And without their realizing it, the use of the gene mutation trope attacks a pillar of pro-vaccine science – the claim that all vaccines are safe and effective. After all, if 1 in 2,000 children has defective genes that lead to cancer (see Parkin et al. 1988 – cited by Greaves), then we have reason to believe that random children have genetic defects that make them susceptible to injury, autoimmune disease, cancer, or death from vaccines. And if defective genes really play a role, then mandatory vaccination laws are unconscionable. And for a medical doctor to inject someone, without first knowing the patient’s genetic profile, should be malpractice.

What amuses me more is that ALL researchers are creating another problem for vaccinists. You see, Dr. Greaves is a dominant figure within the ALL research community. And though his present idea pertains to seeding the gut microbiome orally, 20-30 years ago, he was saying something quite different. According to MacArthur et al. (2007):

“Greaves (1988; 1997) and Greaves and Alexander (1993) postulated that exposure to common infections, in infancy or early childhood, [leads] to improved immunologic resistance during subsequent challenges, while delayed exposure [results] in leukemia among children who had not otherwise developed immunity.”

Exposure to common infections? In the 1980s and 1990s, Greaves postulated that a lack of natural childhood infectious illness led to higher rates of ALL. Is that what Greaves meant by “modern society” being responsible for ALL rates?

But why would he insist that children in modern society – vaccines and all – are not exposed to viral and bacterial infectious agents? Either Greaves does not know what a vaccine is or how it is supposed to function (and thus infection does not reduce the risk of ALL), or he inadvertently told the world – more than once – that artificial exposures through vaccines are killing our children by preventing natural cycles of infection and immunological resistance. (See Greaves at 347).

Vaccines as a Cause of Acute Lymphoblastic Leukemia

So where is the evidence that vaccines cause cancers such as Acute Lymphoblastic Leukemia in our children?

Let us start with Charles Mullighan, a collaborator with and oft-cited by Greaves. In a 2015 review for the New England Journal of Medicine, Drs. Hunger and Mullighan wrote:

“Approximately 6000 cases of acute lymphoblastic leukemia (ALL) are diagnosed in the United States annually; half the cases occur in children and teenagers. In the United States, ALL is the most common cancer among children and the most frequent cause of death from cancer before 20 years of age…In the United States, the incidence of ALL is about 30 cases per million persons younger than 20 years of age, with the peak incidence occurring at 3 to 5 years of age.”

But Hunger and Mullighan did not know this – they cited the American SEER data from 1975-1995. Yet, less than 20 years later, the SEER data from 2009-2013 puts the peak age of ALL diagnosis at age 3. (See Barrington-Trimis et al. 2016). As most of us know, children born after 2002 receive many more injections, antigens, and toxic ingredients than those born before 1990.

No matter what they say, it is NOT the genes

And Barrington-Trimis et al. (2016) give us reason to see the vaccine connection. They write three passages I found instructive:

- (1) “[Within the United States], incidence rates for childhood ALL in all races/ethnicities combined have increased approximately 1% per year since 1973.”

- (2) “Incidence rates of childhood leukemia in the United States have steadily increased over the last several decades … Surveillance, Epidemiology and End Results (SEER) data [show] trends in the incidence of childhood leukemia diagnosed at age 0–19 years from 1992 to 2013, overall and by age, race/ethnicity, gender, and histologic subtype. Hispanic White children were more likely than non-Hispanic White, non-Hispanic Black or non-Hispanic Asian children to be diagnosed with acute lymphocytic leukemia (ALL) from 2009–2013. From 1992–2013, a significant increase in ALL incidence was observed for Hispanic White children (annual percent change (APC) Hispanic = 1.08, 95% CI: 0.59, 1.58); no significant increase was observed for non-Hispanic White, Black or Asian children.”

- (3) “From 2009 to 2013, 5,443 [American] children were diagnosed with leukemia, including 1,999 Hispanic White children (36.7% of childhood leukemia diagnoses), 2,364 non-Hispanic White children (43.4% of childhood leukemia diagnoses), 416 non-Hispanic Black children (7.6% of childhood leukemia diagnoses), 448 non-Hispanic Asian children (8.2% of childhood leukemia diagnoses), and 216 children of another racial/ethnic category. For all leukemia subtypes combined, the Age-Adjusted Incidence Rate from 2009–2013 was 6.05 per 100,000 persons for Hispanic White children, 4.45 per 100,000 persons for non-Hispanic White children, 4.21 per 100,000 for non-Hispanic Asian children, and 2.62 per 100,000 persons for non-Hispanic Black children.”
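The counts and percentages quoted in passage (3) can be cross-checked with a few lines of Python. This is only an arithmetic sketch: the figures are copied from the quotation, and the variable names are illustrative rather than anything taken from Barrington-Trimis et al.

```python
# Case counts for 2009-2013, copied from the passage quoted above.
# The dictionary keys and variable names are illustrative assumptions.
counts = {
    "Hispanic White": 1999,
    "non-Hispanic White": 2364,
    "non-Hispanic Black": 416,
    "non-Hispanic Asian": 448,
    "other": 216,
}

# The group counts should add up to the quoted total of 5,443 diagnoses.
total = sum(counts.values())

# Share of all childhood leukemia diagnoses per group, rounded to one decimal.
shares = {group: round(100 * n / total, 1) for group, n in counts.items()}

print(total)
for group, pct in shares.items():
    print(f"{group}: {pct}%")
```

Note that these are shares of diagnoses, not rates: they depend on how large each group is in the population, which is why the passage also reports age-adjusted incidence per 100,000.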

Steady Yearly Increase

The first statement stands by itself. If any genetic mutation caused ALL, especially in toddlers and prepubescent children, it would naturally decline over time. Hence, ALL rates would not increase due to said mutation. But to see a 1% increase per year – akin to annual increases in autism – is a direct insult from the environment, via the needle.

And the U.S. is not unique in the rapid increase during this time span. Shah and Coleman (2007) report that in Europe, for 30 years, from 1970 to 1999, childhood lymphoid leukemia incidence (including acute lymphoblastic leukemia) increased significantly, by an average of 1.4% per year.
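To see what the two annual rates quoted above (about 1% per year in the United States, 1.4% per year in Europe) amount to over decades, here is a minimal sketch that compounds a constant annual percent change. It assumes the change is the same every year and, for the U.S. figure, that "since 1973" runs through 2013; neither assumption comes from the cited papers, and the function name is hypothetical.

```python
def cumulative_change(annual_percent_change, years):
    """Multiplicative change after compounding a constant annual percent change."""
    return (1 + annual_percent_change / 100) ** years


# Assumption: "since 1973" is read as 1973 through 2013, i.e. 40 yearly steps.
us_factor = cumulative_change(1.0, 2013 - 1973)

# 1970 to 1999 spans 29 yearly steps.
europe_factor = cumulative_change(1.4, 1999 - 1970)

print(f"US incidence multiplier: {us_factor:.2f}")
print(f"Europe incidence multiplier: {europe_factor:.2f}")
```

A multiplier of 1.0 would mean no change over the period; the calculation says nothing about what drives the change.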

The second paragraph needs a bit of explanation. The first part alludes to a Hispanic gene as the cause of ALL. The idea is hardly new – but easily debunked (see the work of Dr. Greaves, below). But the phrase significant increase is a term of art that can mislead. We know that the rates of ALL in all children in the United States have increased. The word significant here means statistically significant: the estimated annual change for that group is unlikely to be due to chance alone, because its 95% confidence interval (0.59 to 1.58) excludes zero. To declare that an increase was not statistically significant is not the same as saying that cancer rates fell.

I offer the third paragraph for two reasons. First, to show how Barrington-Trimis and others pushed the idea of a Hispanic ALL gene. Second, to show that via the division of the four major ethnic groups in the United States, we should see the socio-economic-medical link to ALL.

Comparing Hispanic Rates

The work of Parkin et al. (1988) puts to rest the Hispanic-gene thesis implied by Barrington-Trimis and colleagues. In their review, Parkin et al. included a comparison of children in three parts of Latin America. When comparing populations in Puerto Rico (for years 1972-82), Costa Rica (1980-83), and the City of São Paulo (1969-1978), the children of Puerto Rico had a far higher incidence of ALL – with a peak a full two years earlier than the other two. Presumably, Puerto Rican children would be on the same aggressive vaccination schedule as American children.

Furthermore, for the first decade of the 21st century, the Brazilian Ministry of Health claims that only 4 of every 100,000 children, ages 5 and under, have ALL in the entire State of São Paulo. That would be about one-quarter of the general American ALL rate for children of the same age, and hence well below Hispanic children in the United States.

Given that the vast majority of people from Puerto Rico, Costa Rica, and São Paulo self-identify as Hispanic (a political, not a scientific term), even though they are a mixture of European, African, and indigenous peoples, the appellation Hispanic is near meaningless in a falsifiable (scientific) sense. Further, if ethno-specific genes explained the appearance of ALL, and at present American Hispanics (Latinos) have surpassed Whites, how were their rates lower?

Returning to Professor Greaves, in 2016, he wrote that the annual incidence rate of ALL was between 10 and 45 cases per million. But Greaves was citing Parkin et al. (1988), who looked worldwide, using pre-1980 data. That is to say, in the 1970s, at the national level, ALL rates for various countries in Asia, Latin America, Africa, North America, and Europe, ranged from 1 to 4.5 per 100,000. These numbers are far less than what is seen in the U.S. today.
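The rates in this article are quoted on two scales, per million and per 100,000, so it helps to put them on one scale before comparing. The short sketch below does only that unit conversion, using the figures quoted from Greaves (10 to 45 per million) and from Hunger and Mullighan (about 30 per million); the function and variable names are hypothetical.

```python
def per_million_to_per_100k(rate_per_million):
    """Convert an incidence rate per 1,000,000 persons to a rate per 100,000."""
    return rate_per_million / 10


# Range attributed to Greaves in the text: 10 to 45 cases per million.
greaves_range = [per_million_to_per_100k(r) for r in (10, 45)]

# Figure quoted from Hunger and Mullighan (2015): about 30 cases per million.
hunger_mullighan = per_million_to_per_100k(30)

print(greaves_range)     # [1.0, 4.5]
print(hunger_mullighan)  # 3.0
```

The age-adjusted rates quoted earlier from Barrington-Trimis et al. (2.62 to 6.05 per 100,000) cover all leukemia subtypes combined, so they are not on the same footing as the ALL-only figures converted here.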

So something other than genes must account for both rising rates of ALL and differences across nations.

GDP Per Capita. Spurious or Endogenous?

Back in 1985 Greaves and two co-authors insisted that higher standards of living increased the risk of childhood leukemia, but that race/ethnicity had no effect. If we pierce their data, it is easy to see that they missed or ignored the effects of vaccination. Here are five significant data points:

- (1) A 1957 report from Cape Town indicated that the incidence rate of acute leukemia in colored children (ages 0-4) was only 10% that of Whites (Greaves et al. at 729);

- (2) A 1982 finding on the incidence rate of ALL in children in Queensland, Australia: a comparison of 12 regions differing in socio-economic conditions showed a trend towards a higher rate [of ALL associated] with occupational and educational indicators of higher social class;

- (3) “The idea that ALL is a disease associated with improved living standards has gradually gained credence” [see MacMahon and Keller 1957; Court Brown and Doll 1961]. See also Ramot and Magrath (1982) on changes over time in the leukemia/lymphoma types seen in Arab children living in the Gaza Strip (between 1972-1980);

- (4) Ramot and Magrath (1982) report that the apparent increase in ALL in Arab children from the Gaza Strip between 1972 and 1980 concurred with improved socio-economic conditions and decreased infant mortality, presumably due to infectious disease (Greaves et al. at 729); and

- (5) Reports from the mid-1980s finding a relatively recent increase in the incidence rate of childhood ALL in Black Africans of Nigeria and Uganda. (Found in the textbook Pathogenesis of Leukemias and Lymphomas: Environmental Influences, edited by Magrath, O’Connor, & Ramot.)

Instead of seeing the improved economic conditions as a surrogate measure for higher vaccine rates, Greaves just thought ALL was and is inevitable. He summed the global reports and held:

“These observations … suggest that the pattern of ALL … in less developed countries today might parallel the situation in the U.K. and [American Whites] earlier in [20th] century.”

Can Vaccines Cause Leukemia?: Conclusion

What is most ironic is one particular Wikipedia entry on the cause of ALL. It reads: “Some hypothesize that an abnormal immune response to a common infection [sic] may be a trigger.”

A separate Wikipedia definition of a lymphoblast includes this: “A lymphoblast is a modified naive lymphocyte (white blood cell) with altered cell morphology. It occurs when the lymphocyte is activated by an antigen (from antigen-presenting cells). The lymphoblast then starts dividing two to four times every 24-hours for 3-5 days.”

Given that ALL is a hyperimmune system response, and only about 1 in 2,000 American children will get ALL, even though over 90% of all children receive multiple vaccinations, the development of a cancer illness is abnormal.

But what are these so-called common infections?

Common infections could be measles, rubella, mumps, pertussis, Haemophilus influenzae type b, etc. And recall, these hyperactive, inflammatory responses to vaccines are not just due to the bacterial or viral agent. Vaccines contain a host of other antigens and toxins (e.g., aluminum, glyphosate, formaldehyde, human and animal proteins), each of which generates inflammatory responses in various organs.

By the way, the common infection hypothesis is attributed to none other than Sir Mel Greaves and Dr. Mullighan. I guess that Greaves and Mullighan do think that vaccines cause ALL – they just don’t know it.

John C. Jones received his law degree (2001) and his Ph.D. in political science (2003) from the University of Iowa. He has over 15 years of research and writing experience (both academic and journalistic) in the fields of public policy and law, criminal and Constitutional law, and philosophy of science and medicine. His additional areas of expertise and special knowledge include applied statistics, etymology, political communications/public relations, litigation, and court procedure. He has a particular interest in the science and history of vaccines.

Photo Credit: ID 26651723 © Crazy80frog | Dreamstime.com